Research optimizes biological control of pest that affects soybean crops

Scientists from Unesp and Oklahoma State University verified in the field that the wasp that neutralizes the brown stink bug should ideally be released at 30-meter intervals

Study shows link between pollution and cardiac risk in residents of São Paulo

USP study, published in the journal Environmental Research, analyzed the autopsy results of 238 people along with epidemiological data; the danger is greater for people with hypertension

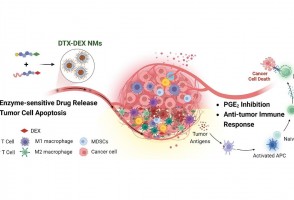

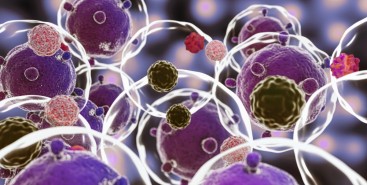

Scientists from Brazil and India create promising treatment for solid tumors

In animal tests, nanoparticles containing substances already approved for human use reduced inflammation in the biological microenvironment where cancers of this type settle and thrive, facilitating the action of the immune system

Registration is open for the 3rd edition of the Prêmio Ciência para Todos

Students and educators in São Paulo's public school system have until June 3 to apply to the call, which accepts research projects in any field of knowledge that use scientific methods to solve problems

Brazilian scientists will search Romania for traces of the last Neanderthals

The mission, led by archaeologist and anthropologist Walter Neves, aims to understand how contact between Sapiens and Neanderthals took place, and why the latter disappeared

Cocaine is a worrying emerging contaminant in Santos Bay, researcher says

The drug accumulates not only in water but also in sediments and marine organisms, and poses a high ecological risk, Unifesp professor Camilo Seabra said during FAPESP Week Illinois

São Paulo School of Advanced Science presents innovative approach to addressing environmental issues

Work focused on transdisciplinarity seeks the participation of diverse social actors in confronting global change. The topic was highlighted at an event held this month in São Luiz do Paraitinga

FAPESP OPPORTUNITIES

PhD in biophysics

EPM-Unifesp

Applications until 30/04/2024

TT-3 in immunology and molecular biology

FMRP-USP

Applications until 30/04/2024

Postdoc in epidemiology and artificial intelligence

UFMG

Applications until 30/04/2024

Postdoc in medicine

EPM-Unifesp

Applications until 30/04/2024

Postdoc in fluid dynamics and materials science

RCGI/Poli-USP

Applications until 30/04/2024

Third FAPESP Conference of 2024 addresses the training of physician-scientists

Call with EU will support research in materials science and engineering

Book highlights the importance of women in shaping geographic thought

Chapter 7: Expedition ends with more than 130 fish species collected

The final episode of the series of reports takes stock of the trip by a group of researchers from USP's Museum of Zoology who, over two weeks, traveled the Negro, Preto and Jauaperi rivers in the states of Amazonas and Roraima

Chapter 6: Trip to the Rio Negro marked a reunion after pioneering expeditions in the 1990s

One of the fishing methods used to collect electric fish on the DEGy Rio Negro Expedition was first employed on a large scale in fresh water in the Calhamazon project, which brought together researchers from Brazil and the United States between 1993 and 1996

Chapter 5: Stingray accident does not slow the pace of collecting on the Jauaperi

The low severity of the injury, rapid medical attention and proper care allowed the researcher to return to work on the same day he was stung by the venomous fish. In the Amazon, such cases often worsen for lack of specialized care

Videos

Field Diary - Rio Negro

The Swiss-Brazilian Legacy in the Amazon: Art, Science and Sustainability

02/03/2024 to 30/04/2024

Introduction to programming with Python for female students in or recently graduated from high school

30/03/2024 to 27/04/2024

Short courses for high school students 2024

06/04/2024 to 08/06/2024

Training on scientific platforms and databases

09/04/2024 to 30/04/2024

14th Computing Week

22/04/2024 to 26/04/2024

20th Brazilian Congress of the Portuguese Language

25/04/2024 to 27/04/2024

Crop spraying will gain a specialized aircraft

Startup supported by PIPE-FAPESP is developing an autonomous helicopter to spray crops on steep terrain

Test will enable minimally invasive detection of micrometastases

Technology may enable enzyme production in Brazil

FAPESP Calls

Comunicar Ciência

Deadline: 22/01

Belmont Forum Climate, Environment, and Health

Deadline: Jan 2024

PIPE Start FAPESP-Sebrae: starting the technology-based entrepreneurial journey

Deadline: 18/03

Research Centers in Artificial Intelligence Applied to Health

Deadline: 18/03

Swiss National Science Foundation

Deadline: 22/03

Support for research in citriculture

Deadline: 31/03

Amazônia+10 Scientific Expeditions

Deadline: 29/04