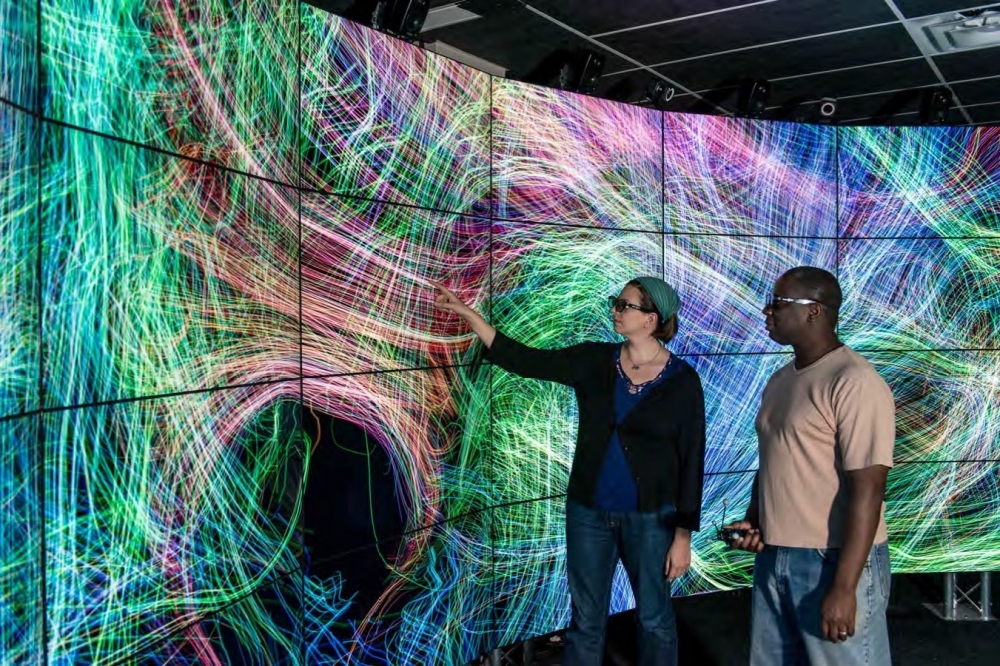

Visualization of the map of neural connections of the brain as part of the NIH Connectome project in the "virtual cave" of the University of Illinois at Chicago (photo: EVL)

Technological concepts depicted in movies such as Minority Report have led to the establishment of a virtual reality environment for the analysis and manipulation of large amounts of images and data.

By Elton Alisson

Agência FAPESP – When American film director George Lucas wrote the screenplay for the first film in the Star Wars series in 1977, he planned to use computer graphics in one of the major scenes, in which the “Rebel Alliance” is presented with a plan of attack on the Death Star space station.

However, at the time, computer graphics were just beginning to be explored by special effects companies such as Industrial Light & Magic, which was founded by Lucas himself in 1975.

The technological solution for the scene was found at the Electronic Visualization Laboratory (EVL) at the University of Illinois at Chicago (UIC) in the United States. At the time, researchers at the institution were developing a computer graphics system to teach molecular modeling to chemistry students. Using this system, they were able to create the three-dimensional animations Lucas had envisioned for the film.

“The same system that created scientific visualization images was used to do the special effects for the Star Wars movie,” said Maxine Brown, EVL director, in a lecture given during CineGrid Brasil, the international conference held on August 28-29, 2014, in the theater of the School of Medicine at the University of São Paulo (FMUSP).

“Larry Cuba, the artist hired to do the graphics used in the scene, came to the EVL and used our computer graphics hardware and software to create the presentation sequence for the plan of attack on the Death Star shown in the movie,” she noted.

This scientific visualization technology, developed by the institution for scientific purposes, is one of several such technologies that have ended up inspiring fiction and reaching movie screens.

On the other hand, computer visualization concepts that have been imagined and presented for the first time in movies have also led researchers from the institution to develop solutions for scientific purposes.

“Science influences the movies and vice-versa,” Brown said. “Sometimes, people see technologies that were developed in our laboratory that they thought were only found in science fiction movies. Conversely, a lot of what we see at the movies that is still science fiction inspires our scientists.”

The virtual reality environment “Holodeck,” presented for the first time in the Star Trek television series that premiered in 1987, led researchers in 1992 to develop the CAVE Automatic Virtual Environment (CAVE), a virtual reality projection system.

The “virtual cave” is a cube-shaped room in which images are projected onto three walls and the floor, accompanied by sound. Visitors view the images in three dimensions by wearing stereoscopic glasses.

The user is able to explore the projected scene by moving around in the cube and using three control buttons to manipulate the three-dimensional objects.

“The CAVE was designed to be a useful tool for scientific visualization, and when it was introduced, they started calling it the Holodeck [from holography],” Brown explained. “It had several applications, such as in a project to virtually reconstruct the Harlem neighborhood [in New York City] during the 1920-1930 period.”

New version

In 2012, the EVL researchers released a new version of the digital cave, CAVE2, which resembles the “war room” of the 1964 Stanley Kubrick movie Dr. Strangelove. This virtual reality environment is nearly 24 feet in diameter and 8 feet tall, and consists of a single curved wall with more than 70 liquid crystal display (LCD) panels (touch screens).

The room offers users a 320° panoramic view of high-resolution images that are projected on the wall of LCD touch screens at 37 megapixels (millions of pixels) in three dimensions or 74 megapixels in two dimensions.

The wall of screens can be used both to explore virtual reality simulations and to analyze large volumes of images placed side by side.

The images are viewed as a whole and manipulated using an interactive visual data exploration technology developed at the EVL over the past five years. Users can touch the screen, as they would a smartphone, or move the data with gestures captured by a motion sensor, as in the 2002 Steven Spielberg science fiction movie Minority Report.

In the movie, the character played by US actor Tom Cruise uses special gloves and gestures to manipulate images, audio and other data files projected on a clear screen.

“The wall of screens in CAVE2 also allows the combining of images and data, so for example a group of researchers can project graphs relating to a single problem that they are attempting to solve to allow everyone to analyze them at once,” Brown said.

According to Brown, the hybrid virtual reality environment is being used on the EVL’s Batman Project, the name of which alludes to a scene from the 2008 Christopher Nolan movie The Dark Knight in which the character Lucius Fox, played by Morgan Freeman, monitors crimes committed in the fictitious city of Gotham on a curved wall of monitors.

The project is designed to display crime data for Chicago – high-crime areas, for example – to help police and decision makers develop more effective approaches to fighting crime.

“We use Google Maps to show the city of Chicago on the CAVE2 wall, superimposed with crime data,” Brown said. “This has allowed us to see several parts of the city at the same time and make comparisons regarding high-crime areas.”

Scientific applications

According to Brown, CAVE2 has also been used to view sets of complex scientific data, such as data from the Human Connectome Project.

Launched in 2009 by the National Institutes of Health (NIH), the Human Connectome Project is designed to identify and map the neural pathways that underlie adult human brain function.

Psychiatry researchers at UIC who study depression have used CAVE2 to analyze, in a virtual reality environment, neural network images produced by magnetic resonance equipment.

“CAVE2 allows researchers and medical professionals to view data at a much more detailed level than ever before,” Brown said.

More recently, a group of researchers from NASA’s Astrobiology Science & Technology for Exploring Planets Program (ASTEP) has begun to use the virtual reality environment to assess the outcome of field tests on an unmanned underwater vehicle designed to explore beneath the ice-covered surface of Europa, one of the four largest moons of the planet Jupiter.

Named Endurance, the robot was designed to navigate under the ice, collecting data and samples of microbial life, and to map the underwater environment for the production of three-dimensional maps.

To prepare for the Endurance mission, which is expected to take place after 2020, the researchers conducted a series of field tests in places such as Lake Bonney in Antarctica, which is permanently covered with ice.

The data collected by the robot in Antarctica were transmitted to the EVL, where they were used to generate three-dimensional images, maps and data representations of the lake.

The laboratory researchers then created a tool for the simultaneous visualization of hundreds of high-resolution georeferenced images of the layer of ice that covers the lake, which they can use to study the distribution of sediments trapped in the ice surface.

“By meeting in the virtual reality room, the engineers who designed the robot and the scientists involved in collecting data for the project are able to understand the problems each group has and to collectively study solutions,” Brown said.

Republish

Agência FAPESP licenses its news under Creative Commons (CC-BY-NC-ND) so that it can be republished free of charge and in a simple way by other digital or print outlets. Agência FAPESP must be credited as the source of the republished content, and the name of the reporter (if any) must be attributed. These rules are detailed in the FAPESP Digital Republishing Policy.